How Google Reads Content: Entities, Relationships, and Topic Domains

- account_circle mbahkatob

- calendar_month Jumat, 21 Nov 2025

- visibility 17

- comment 0 komentar

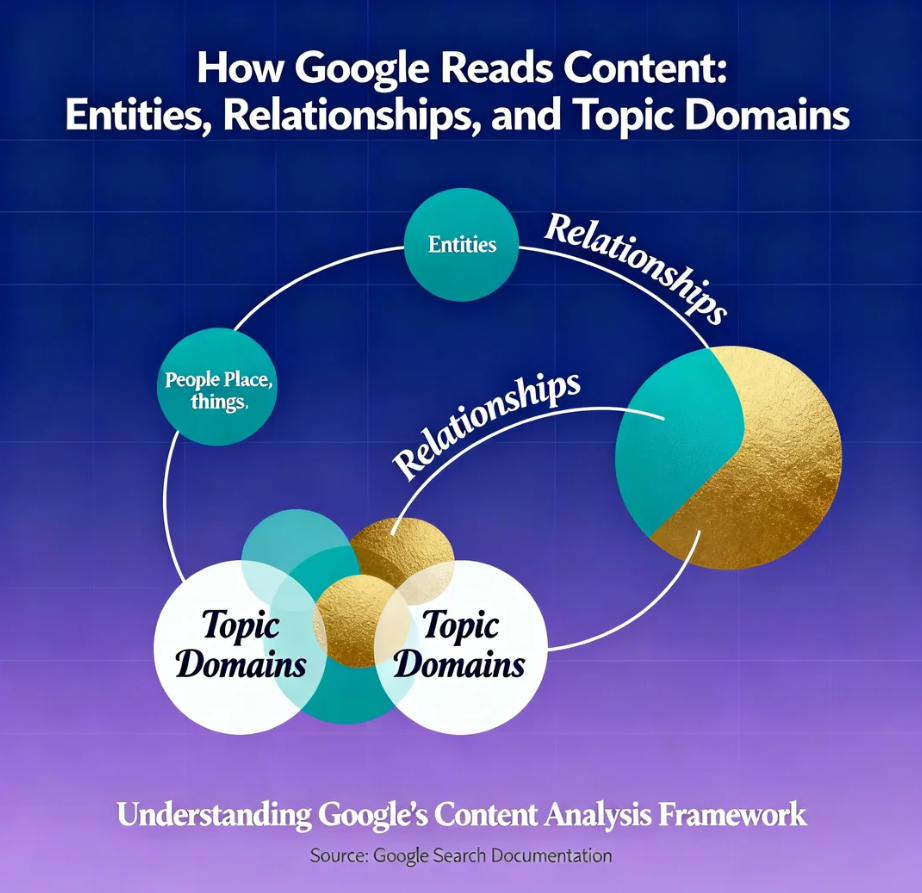

Entity-based SEO is the strategic evolution from optimizing for keywords (strings of text) to optimizing for Entities (distinct, machine-understandable concepts) and the semantic relationships that connect them. In the Neural Search era of 2026, Google does not “read” your content like a human; it parses it like a massive database. It uses advanced Natural Language Processing (NLP) to extract Named Entities, calculate their Salience (relevance score), and map them against its trillion-node Knowledge Graph. If your content is just a bag of keywords without clear entity definitions, it is mathematically invisible to the algorithm.

The Lie: “Google Counts Keywords”

The most persistent and damaging myth in the SEO industry is the concept of “Keyword Density.” For nearly two decades, SEO gurus sold plugins and courses that warned you: “Your target keyword only appears 0.5% of the time. You must increase it to 2.5% to rank.” They taught you to sprinkle your target phrase into the text like seasoning on a steak, believing that repetition equals relevance. They believed that if you shouted “Best Pizza” enough times, Google would eventually believe you.

This is a lie.

It is a relic of the “Lexical Search” era (pre-2013). Back then, search engines were dumb pattern-matching machines. They were glorified library card catalogs. If you searched for “best pizza,” they looked for the document that contained the string of characters “b-e-s-t p-i-z-z-a” the most times in the header and body.

Today, Google’s algorithms (RankBrain, BERT, MUM, and Gemini) utilize Semantic Search. They don’t care how many times you say the word. They care about the meaning behind the word.

-

If you write an article about “Apple” and mention “pie,” “cinnamon,” “oven,” and “crust,” Google knows you mean the fruit.

-

If you write about “Apple” and mention “iPhone,” “Tim Cook,” “Cupertino,” and “iOS,” Google knows you mean the technology giant.

If you are still obsessing over keyword frequency, exact-match headers, and stuffing phrases into alt text, you are optimizing for a 1998 AltaVista crawler. You are feeding a dead algorithm. You are playing checkers while Google is playing 4D Chess.

The Truth: “Things, Not Strings”

Here is the revelation: Google is no longer indexing the web; it is mapping the world.

In 2012, Google introduced the Knowledge Graph with the manifesto: “Things, not strings.”

-

String: A sequence of characters (e.g., “J-a-g-u-a-r”).

-

Thing (Entity): The unique concept. Is it the animal (Entity ID:

/m/04_51)? Is it the car brand (Entity ID:/m/03n4r)? Is it the Fender guitar model?

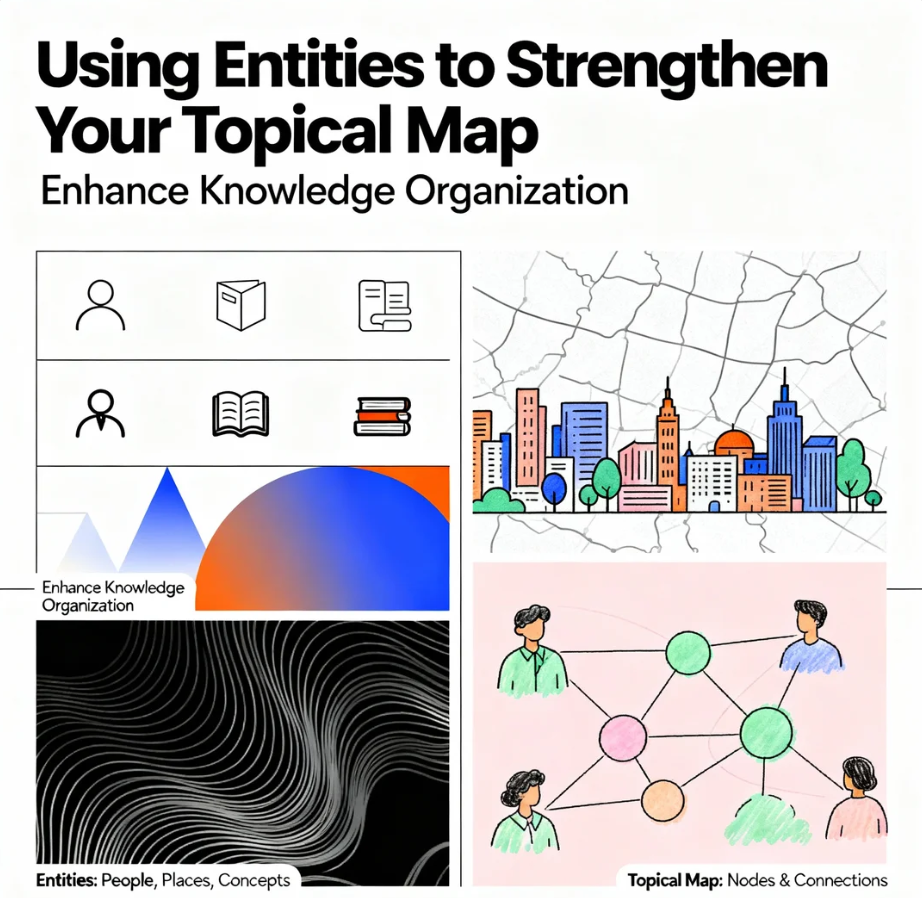

To Google, your website is a document containing candidate entities. Its primary job is Disambiguation. It reads your content to determine exactly which entities you are talking about, how confident it is in that assessment, and how those entities relate to the user’s query intent.

The NLP Parsing Process (How the Bot Thinks)

When Googlebot crawls your page, it doesn’t just “save” the text. It runs it through a complex NLP pipeline:

-

Entity Extraction: It scans the text and identifies nouns (Entities).

-

Categorization: It classifies them into types (Person, Organization, Location, Event, Consumer Good).

-

Salience Scoring: It assigns a score (0.0 to 1.0) to each entity based on how central it is to the text. If “Elon Musk” is mentioned once in a footer, his salience is 0.01. If the entire article is about him, it is 0.99.

-

Sentiment Analysis: It determines if the context around the entity is positive, negative, or neutral.

-

Relationship Mapping: It looks for the “edges” (verbs and prepositions) that connect entities. “[Elon Musk] (Subject) -> [founded] (Predicate) -> [SpaceX] (Object).”

If you write about “Bank” but use words like “River,” “Flow,” and “Water,” Google creates a vector for a “River Bank.” If you use words like “Deposit,” “Interest,” and “Loan,” it creates a vector for a “Financial Institution.” Entity-based SEO is the art of ensuring Google never gets confused about who you are, what you do, and what you are an expert in.

The Protocol: Engineering Semantic Clarity

You must stop writing for word count and start writing for Entity Salience. You need to spoon-feed Google’s NLP API. Follow this protocol to make your content machine-readable, authoritative, and impossible to misunderstand.

Phase 1: The Semantic Triple Strategy (Subject-Predicate-Object)

Machines understand logic in Semantic Triples. A triple consists of a Subject, a Predicate, and an Object. This is the atomic unit of knowledge. Complex, flowery sentences confuse bots. Simple, declarative sentences educate them.

-

The Tactic: Ensure your core definitions and key takeaways use the Subject-Predicate-Object structure.

-

Bad (Flowery/Ambiguous): “When considering the vast array of options for celestial travel and the titans of industry involved, SpaceX often stands out as a luminary in the field…”

-

Bot Analysis: Low confidence. Too much noise. What is the relationship?

-

-

Good (Machine-Readable): “SpaceX (Subject) manufactures (Predicate) reusable rockets (Object).”

-

Bot Analysis: High confidence. Fact extracted. Added to Knowledge Vault.

-

Why this matters: This structure allows Google to easily extract the fact and add it to the Knowledge Vault. If Google can extract facts from your site, you become a candidate for the Knowledge Graph and AI Overviews. You become a “Source of Truth.”

Phase 2: Increasing Entity Salience

You want your main topic (Target Entity) to have a Salience Score of 1.0. Many writers dilute their own relevance. If you write an article about “SEO Strategies,” but you spend 500 words telling a story about your grandmother’s baking recipes to be “relatable,” the Salience of “SEO” drops, and the Salience of “Grandmother” rises. Google categorizes the page incorrectly (e.g., “Cooking” instead of “Marketing”).

The Salience Protocol:

-

Placement: The Main Entity must appear in the first sentence of the H1, the first paragraph, and the conclusion.

-

Frequency (Smart Repetition): You don’t need keyword stuffing, but the entity must be the “protagonist” of the article.

-

Co-Occurrence: Surround your Main Entity with LSI Keywords and related entities found in the Knowledge Graph. If you write about “Batman,” you must mention “Robin,” “Joker,” “Gotham,” and “DC Comics.” These co-occurring entities confirm the context.

Phase 3: Establishing Topic Domains via Attributes

To prove you are an authority on a Topic Domain, you must cover the attributes of the entity, not just the entity itself. A shallow article mentions the entity. An authoritative article maps its attributes.

-

Example Entity: “Tesla Model S.”

-

Required Attributes (The Neural Lattice):

-

Range: “405 miles.”

-

Acceleration: “0-60 in 1.99s.”

-

Price: “$74,990.”

-

Charging: “Supercharger Network.”

-

Manufacturer: “Tesla, Inc.”

-

Execution: If your content misses these attributes, Google’s NLP sees a “partial entity.” It assumes your content is “thin” or “low information gain.” You must conduct GAP Analysis against Wikipedia to ensure you cover every attribute the Knowledge Graph expects for that entity type.

Phase 4: Disambiguation via Structured Data (The Digital Passport)

Don’t leave it to chance. Use Schema.org JSON-LD to explicitly tell Google the Entity ID (URL) of your topic. This is the ultimate “Disambiguation” maneuver.

-

The

sameAsPower Move: In your JSON-LD, link your topic to its Wikipedia, Wikidata, or Google Knowledge Graph entry.JSON{ "@context": "https://schema.org", "@type": "Article", "headline": "The Future of Artificial Intelligence", "about": { "@type": "Thing", "name": "Artificial Intelligence", "sameAs": [ "https://en.wikipedia.org/wiki/Artificial_intelligence", "https://www.wikidata.org/wiki/Q11660" ] }, "mentions": [ { "@type": "Thing", "name": "Machine Learning", "sameAs": "https://en.wikipedia.org/wiki/Machine_learning" } ] }Why this wins: This tells Google: “My article is about the exact same concept as this Wikipedia page.” It creates a hard link in the Knowledge Graph. It removes 100% of ambiguity. Even if your text is vague, your code is precise.

Phase 5: Visual Entity Recognition

In 2026, Google reads images as well as text. Google Lens and Cloud Vision API analyze every image on your site.

-

The Tactic: Do not use generic stock photos. Use images that clearly depict the Entity.

-

The Validation: If you upload your image to Google Cloud Vision API, does it recognize the entity?

-

Image: A photo of a generic laptop. -> Label: “Electronic Device.” (Weak).

-

Image: A clear photo of a MacBook Pro. -> Label: “MacBook Pro,” “Apple.” (Strong).

-

-

Optimization: Ensure your images reinforce the textual entities. If your text says “Eiffel Tower” but your image is the Statue of Liberty, you create an Entity Conflict, damaging trust.

The Call to Dominance

The era of “keyword stuffing” is over. The era of Entity Engineering is here. You can continue to write vague, fluffy content that targets strings of text, hoping to trick a 2010 algorithm that no longer exists. You can watch your rankings vanish as Google’s NLP gets smarter and filters out your noise.

Or, you can master Entity-based SEO. You can write content that speaks the language of the machine. You can define your entities, map their relationships, and present a clear, unambiguous data structure that Google trusts implicitly.

The machine is reading. It is judging your confidence, your salience, and your accuracy. Make sure it understands you. Define the Entity. Own the Topic. Rule the Graph.

Tags: #googlenlp, #entityseo, #knowledgegraph, #topicdomains, #semanticsearch, #namedentityrecognition, #googlealgorithms, #contentrelevance, #semanticrelations, #seotechnology

- Penulis: mbahkatob

Saat ini belum ada komentar