How to Build AI-Friendly Content Architecture (2026 Edition)

- mbahkatob

- Friday, 21 Nov 2025

An AI-readable site structure is the strategic organization of digital assets using semantic HTML, logical hierarchy, and structured data to ensure Large Language Models (LLMs) and search bots can parse, understand, and serve your content with zero friction. In the emerging era of generative search and autonomous agents, your website is no longer just a visual interface for humans; it is a structured database for machines. If your architecture is not robot-friendly, your content is effectively invisible to the engines that matter. This manifesto dismantles the outdated rules of web design and establishes the mandatory protocol for building a digital fortress that speaks the native language of AI.

The Lie: “If It Looks Good to Humans, It Will Rank”

Stop believing the lie that User Experience (UX) is purely visual.

For the last decade, marketers, designers, and business owners have operated under a dangerous delusion. They pour tens of thousands of dollars into sleek animations, parallax scrolling, massive hero images, and JavaScript-heavy interfaces. They obsess over the “human feel” of the website—the fonts, the colors, the emotional resonance of the photography.

This is a fatal error.

In 2026, your primary visitor is not a human. It is an AI Agent.

Before a human ever sees your content, an AI bot—be it Google’s crawler, ChatGPT’s browsing agent, Perplexity’s discovery engine, or an autonomous shopping bot—must visit, parse, and “understand” your site.

Here is the brutal reality: To a machine, your beautiful parallax effect is just heavy code bloat. Your clever, mysterious navigation menu is a labyrinth. Your “creative” layout that ignores grid standards is a chaotic mess of unstructured data.

If your site is a visual masterpiece but a structural disaster—full of <div> soup, broken hierarchies, orphan pages, and uncrawlable links—the AI will treat it as noise. It has a finite “compute budget.” It will not waste valuable processing power trying to decipher your artistic mess. It will simply abort the crawl and move on to your competitor who served the data on a silver platter.

Visual beauty without structural logic is a digital graveyard. You are building a palace that has no doors.

The Truth: Your Website is a Dataset, Not a Brochure

Here is the revelation you need to embed in your brain: To an AI, your website is a training dataset.

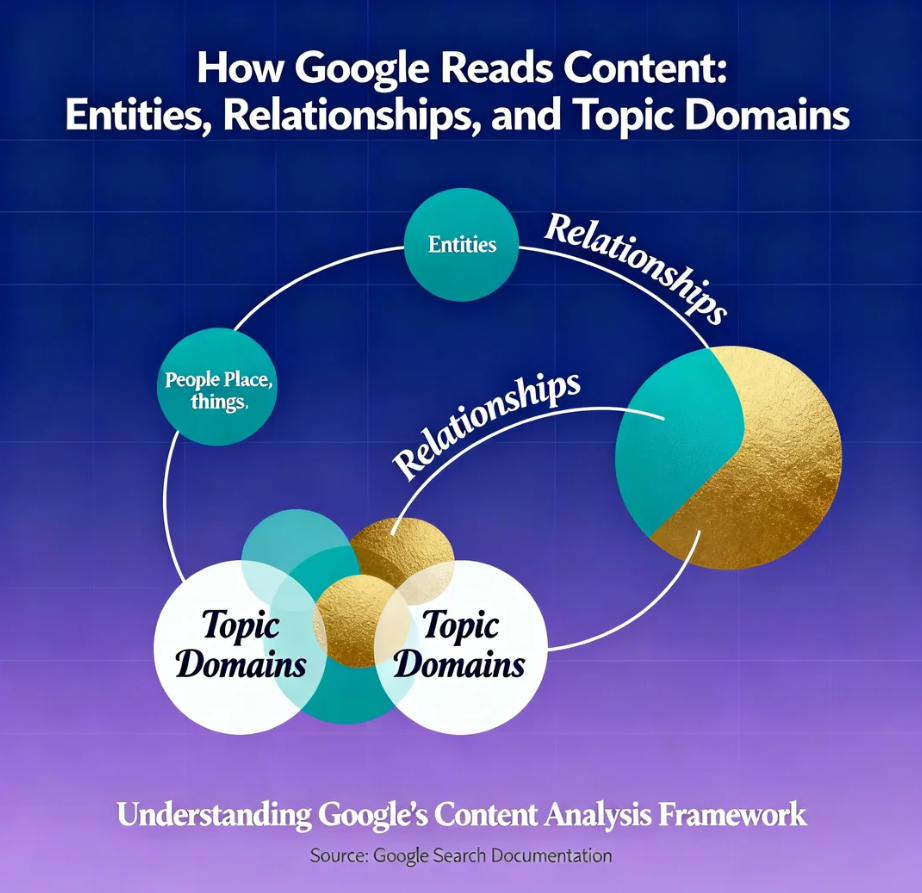

When Google’s AI Overviews (formerly SGE) or an LLM scans your site, it isn’t “reading” like a human reads a novel. It is tokenizing text. It is mapping vector relationships between entities. It is calculating the probability that your content satisfies a specific intent query. It is looking for Information Gain: new, unique value that justifies citing you.

- Old SEO: Optimized for keywords, backlinks, and “human engagement” signals like bounce rate.

- New SEO: Optimizes for Computational Efficiency, Entity Salience, and Machine Readability.

The winners of 2026 are building Robot-Friendly Architecture. They are proactively reducing the “cognitive load” for machines. They are ensuring that when an AI scrapes their site, it gets clean, structured, machine-readable text that can be instantly synthesized into an answer.

If you are not building for the machine first, you are not building for the future. You are building a legacy artifact that will gather dust in the archives of the internet.

The Protocol: Engineering the Neural Lattice

This is not a suggestion. This is the mandatory architecture for digital sovereignty. If you want to survive the transition to AI-first search, you must rebuild your foundation. Follow this protocol to transform your website from a brochure into a high-efficiency data node.

Phase 1: Semantic HTML (The Skeleton)

The vast majority of websites today are built with what developers call “Div Soup.” They rely on endless nested <div> tags to structure content. To a human eye, a <div> styled as a header looks like a header. But to a bot, a <div> is a generic, meaningless container. It tells the bot nothing about the content inside.

You must switch to Semantic HTML. This gives context to your code. It transforms your layout into a labeled map.

The Semantic Mandate:

- <header> vs <div>: Do not wrap your logo and menu in a generic div. Use <header>. This tells the AI, “This is the introduction and navigation context for the page.”

- <nav> for Arteries: Use the <nav> tag only for major navigation blocks. Do not use it for footer links or sidebar lists. This signals to the bot, “These links are the primary arteries of the site structure.”

- <main> for the Core: Every page must have one (and only one) <main> element. This is critical. It tells the AI, “Ignore the sidebar, ignore the footer, ignore the ads. THIS is the content you need to index.”

- <article> for Entities: Use <article> for self-contained compositions like blog posts or news items. This signals that the content inside makes sense on its own, independent of the rest of the page.

- <section> for Themes: Use <section> to group thematically related content within an article. Each section should ideally have a heading (<h2> or <h3>), creating a clear outline for the bot to follow.

- <aside> for Noise: Use <aside> for tangential content (sidebars, related posts widgets, ads) that is not critical to the main topic. This explicitly tells the AI to deprioritize this content, ensuring it doesn’t dilute the relevance of your main keyword.

Why this matters: When an LLM parses your code, Semantic HTML acts as a roadmap. It tells the AI: “Pay attention to the <article>, ignore the <aside>, and understand that the <nav> connects these concepts.” This drastically improves crawlability and relevance scoring. It removes the guesswork.
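To make the roadmap idea concrete, here is a minimal Python sketch of how a parser could lean on semantic tags. It is an illustration only, not how any real crawler works: the `MainContentExtractor` class, the `SKIP` set, and the `SAMPLE` markup are all hypothetical names invented for this example.

```python
from html.parser import HTMLParser

# Elements this sketch treats as secondary content (an assumption, not a standard).
SKIP = {"nav", "aside", "footer", "script", "style"}


class MainContentExtractor(HTMLParser):
    """Collect text that sits inside <main> but outside any SKIP element."""

    def __init__(self):
        super().__init__()
        self.main_depth = 0  # greater than 0 while inside <main>
        self.skip_depth = 0  # greater than 0 while inside a SKIP element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "main":
            self.main_depth += 1
        elif tag in SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "main" and self.main_depth:
            self.main_depth -= 1
        elif tag in SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.main_depth and not self.skip_depth:
            self.chunks.append(text)


def extract_main_text(html: str) -> str:
    """Return the core text of a page, joined with single spaces."""
    parser = MainContentExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


SAMPLE = """
<header><nav><a href="/">Home</a></nav></header>
<main>
  <article>
    <h1>Site Architecture</h1>
    <section><h2>Semantic HTML</h2><p>Label your layout.</p></section>
    <aside>Related posts</aside>
  </article>
</main>
<footer>Contact us</footer>
"""

print(extract_main_text(SAMPLE))
# prints: Site Architecture Semantic HTML Label your layout.
```

The same page built from anonymous <div> containers would give this extractor nothing to key on, which is the point of the mandate above.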

Phase 2: Logical Hierarchy and Siloing (The Brain)

A flat site structure (where every page is one click from the home page) is chaos. A deep, messy structure (where pages are buried 10 clicks deep) is a labyrinth. You need a Logical Hierarchy based on Topic Silos.

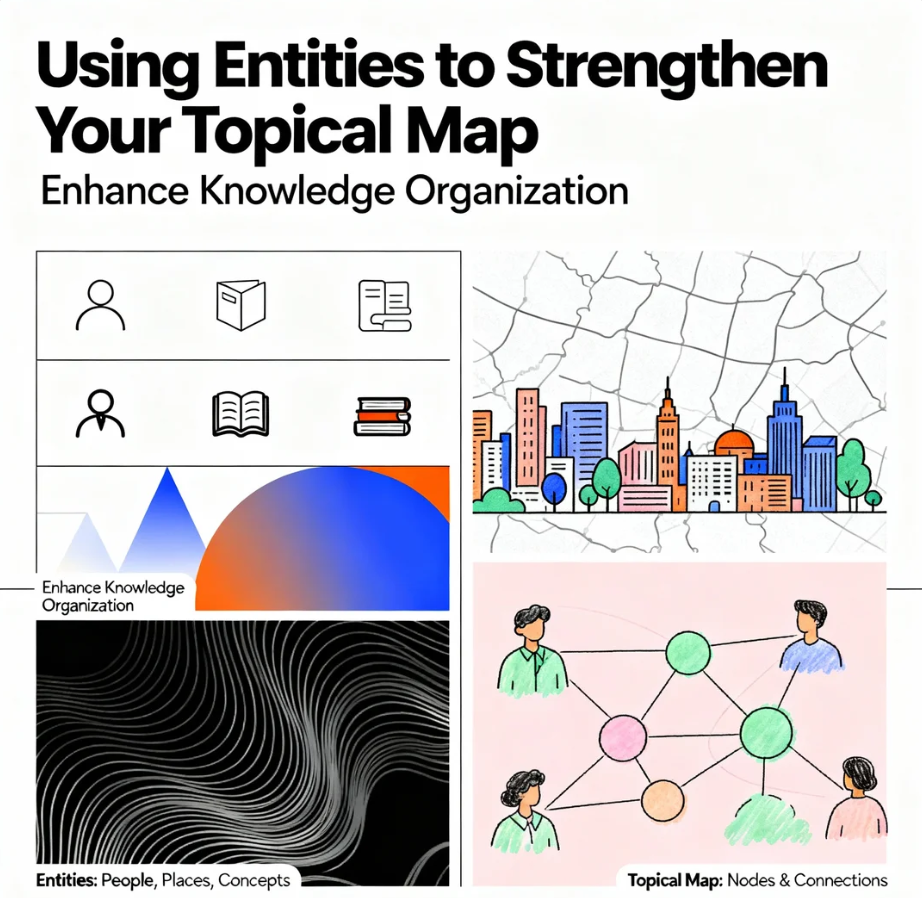

You must stop thinking in terms of “Pages” and start thinking in terms of “Topic Clusters.”

The AI-Readable Structure Model:

| Human View | AI Agent View (The Reality) |
| --- | --- |
| “Menu Bar” | Topic Cluster Root (The highest authority node that defines the domain’s expertise) |
| “Blog Post” | Entity Node (A specific data point that must link back to the root to establish context) |
| “Related Posts” | Semantic Vector Path (Defines the relationship distance between two entities) |
| “Footer” | Noise (Unless structured with schema to define NAP or Policy data) |

Execution Protocol:

- The Rule of 3: No important page should be more than 3 clicks from the homepage. AI crawlers have a “crawl budget.” If they have to dig too deep, they assume the content is unimportant.

- Parent-Child URL Logic: Your URL structures must reflect your hierarchy. This is non-negotiable.

  - Bad (Flat): domain.com/best-seo-tips

  - Bad (Date-Based): domain.com/2026/01/seo-tips

  - Good (Siloed): domain.com/services/seo/predictive-modeling

  - Analysis: The siloed URL tells the AI exactly where this content fits. It says: “This page is about Predictive Modeling, which is a subset of SEO, which is a Service offered by this Entity.”

- Contextual Anchors: Internal links must use descriptive, keyword-rich anchor text. Never use “Click Here” or “Read More.”

  - Weak: “Click here to read about architecture.”

  - Strong: “Learn how to build an AI-readable site structure.”

  - The anchor text is the label the AI uses to understand what is on the other side of the link before it follows it.
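The Rule of 3 can be audited with a breadth-first search over your internal links. The sketch below assumes you already have a map of each page to the pages it links to (for example, from a crawl export). The `SITE` data and the function names are hypothetical, chosen only for illustration.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search: minimum number of clicks from `home` to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], home: str, max_clicks: int = 3) -> list[str]:
    """Pages reachable from `home` that need more than `max_clicks` clicks."""
    depths = click_depths(links, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical internal link graph: page -> pages it links to.
SITE = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo"],
    "/services/seo": ["/services/seo/predictive-modeling"],
    "/blog": ["/blog/archive"],
    "/blog/archive": ["/blog/archive/2019"],
    "/blog/archive/2019": ["/blog/archive/2019/old-post"],
}

print(too_deep(SITE, "/"))
# prints: ['/blog/archive/2019/old-post']
```

Pages that never appear in the result of `click_depths` are unreachable from the homepage altogether, which is a separate problem from depth.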

Phase 3: The Universal Translator (JSON-LD)

If Semantic HTML is the map, JSON-LD structured data is the GPS coordinates. It is not “extra credit.” It is the primary language you use to speak to the machine. It allows you to spoon-feed the AI exactly what your page is about, bypassing the ambiguity of natural language processing.

You cannot rely on Google to “figure out” that your article is a review or a recipe. You must tell it explicitly using the Schema.org vocabulary.

The Mandatory Schema Stack:

- Organization Schema: To establish your Brand Entity. This should live on your homepage. It defines who you are, your logo, your social profiles, and your contact info. It connects the dots across the web.

- WebSite Schema: To define the site search action, helping AI agents understand how to query your internal database.

- Article Schema: Every blog post must have this. It defines the headline, author, datePublished, and dateModified. Crucially, its subtypes (such as NewsArticle and TechArticle) help AI differentiate between news, tech articles, and opinion pieces.

- Breadcrumb Schema (BreadcrumbList): To explicitly define the site’s hierarchy to the bot. Even if your visual breadcrumbs are subtle, the schema tells the bot exactly where it is in the silo.

- FAQ Schema (FAQPage): To dominate Voice Search and Q&A queries. By wrapping your Q&A sections in FAQ schema, you make that content portable. Google Assistant or ChatGPT can lift that Q&A and serve it directly to the user.

- CollectionPage Schema: Use this for your category archives. It tells the bot, “This is not an article; this is a list of items related to Topic X.”

By implementing this stack, you achieve API accessibility. You make your content data-portable, allowing AI agents to query your site like a structured database rather than scraping it like a messy text document.
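As a rough sketch of two items from the stack, the Python below builds Article and BreadcrumbList objects and wraps them in JSON-LD script tags. The property names come from the Schema.org vocabulary. The helper names, the full `https://domain.com/...` URL, and the choice to derive breadcrumb names from URL slugs are assumptions made for this example, and the `dateModified` value is a placeholder.

```python
import json
from urllib.parse import urlparse


def breadcrumb_jsonld(url: str) -> dict:
    """Derive a BreadcrumbList from a siloed URL path (needs scheme and host)."""
    parsed = urlparse(url)
    base = f"{parsed.scheme}://{parsed.netloc}"
    items, path = [], ""
    segments = [s for s in parsed.path.split("/") if s]
    for position, segment in enumerate(segments, start=1):
        path += "/" + segment
        items.append({
            "@type": "ListItem",
            "position": position,
            # Assumption: the slug stands in for the real page title.
            "name": segment.replace("-", " ").title(),
            "item": base + path,
        })
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }


def article_jsonld(headline: str, author: str,
                   date_published: str, date_modified: str) -> dict:
    """Minimal Article object with the four properties named in the text."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "dateModified": date_modified,
    }


def script_tag(data: dict) -> str:
    """Serialize to a JSON-LD script element; escape '</' so the tag cannot close early."""
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


article = article_jsonld(
    "How to Build AI-Friendly Content Architecture (2026 Edition)",
    "mbahkatob",
    "2025-11-21",
    "2025-11-21",  # placeholder: replace with the real last-modified date
)
crumbs = breadcrumb_jsonld("https://domain.com/services/seo/predictive-modeling")

print(script_tag(article))
print(script_tag(crumbs))
```

Slug-derived names are crude ("seo" becomes "Seo"), so in practice you would likely feed in each page's actual title instead.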

Phase 4: Clear Navigation and Crawl Efficiency

Clear navigation paths are the arteries of your site. If they are clogged with JavaScript, hidden behind clicks, or convoluted, the bot cannot flow.

The Efficiency Protocol:

- Eliminate Orphan Pages: Every page must be linked from somewhere. An orphan page is a dead neuron. It has no connection to the neural lattice. If an AI finds a page with no incoming internal links, it assumes the page has zero authority and ignores it.

- Kill Render-Blocking Resources: Speed is critical not just for humans, but for bots. Bots have a time limit. If they have to wait 3 seconds for a heavy JavaScript file to load just to see your navigation menu, they will time out.

- Text-Based Links: Do not use images or JavaScript buttons for core navigation unless they have proper alt text and aria-label attributes. Bots read text. If your menu is a fancy React component that renders client-side, ensure it has server-side rendering (SSR) or a static fallback.

- The Footer is for Structure, Not Stuffing: Use your footer to link to your core pages (About, Contact, Services) and your legal pages. Do not stuff it with 50 keyword-rich links. That is a spam signal.
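Orphan pages are straightforward to detect once you have two inputs: the full list of pages you publish (from a sitemap, say) and the internal links between them. The following is a minimal sketch under that assumption. `find_orphans`, `PAGES`, and `LINKS` are hypothetical names and data.

```python
def find_orphans(all_pages: set[str], links: dict[str, list[str]],
                 home: str = "/") -> list[str]:
    """Return pages that no other page links to (the homepage is exempt)."""
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(all_pages - linked - {home})


# Hypothetical inputs: every published URL, plus page -> outgoing internal links.
PAGES = {"/", "/about", "/services", "/services/seo", "/landing/old-promo"}
LINKS = {
    "/": ["/about", "/services"],
    "/services": ["/services/seo", "/"],
    "/landing/old-promo": ["/landing/old-promo"],
}

print(find_orphans(PAGES, LINKS))
# prints: ['/landing/old-promo']
```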

Phase 5: Optimizing for the “Context Window”

In the age of LLMs, we must consider the Context Window, the amount of text an AI can process at once. While context windows are growing, models still tend to use information at the beginning and end of a long input more reliably than information buried in the middle.

Formatting for Machine Attention:

- The Inverted Pyramid: Place the most critical information (the definition, the answer, the conclusion) at the very top. Do not bury the lead. This ensures that even if the crawl is partial, the core data is captured.

- Heading Logic: Your H1, H2, and H3 tags are not just for font size. They form the outline of the data. An AI should be able to read only your headings and understand the entire argument of the article.

- Topic Density: Ensure your content stays on topic. AI measures “vector distance.” If your article about “Site Architecture” starts rambling about “Instagram Marketing,” the semantic relevance score drops, and the AI classifies the content as low-quality or unfocused.
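One way to test the Heading Logic rule is to strip a page down to its headings and read what is left. The Python sketch below does that and also flags jumps of more than one level (for example, an h2 followed directly by an h4). Treating such jumps as a problem is an assumption of this sketch, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for every h1-h6 element, in document order."""

    def __init__(self):
        super().__init__()
        self.current = None  # level of the heading currently open, if any
        self.buffer = []
        self.outline = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.current = int(tag[1])
            self.buffer = []

    def handle_data(self, data):
        if self.current is not None:
            self.buffer.append(data)

    def handle_endtag(self, tag):
        if self.current is not None and tag == f"h{self.current}":
            text = " ".join("".join(self.buffer).split())  # collapse whitespace
            self.outline.append((self.current, text))
            self.current = None


def heading_outline(html: str) -> list[tuple[int, str]]:
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    return parser.outline


def skipped_levels(outline: list[tuple[int, str]]) -> list[str]:
    """Report headings that jump more than one level below the previous heading."""
    problems = []
    for (prev_level, _), (level, text) in zip(outline, outline[1:]):
        if level > prev_level + 1:
            problems.append(f"h{level} '{text}' follows h{prev_level}")
    return problems


PAGE = """
<h1>AI-Readable Site Structure</h1>
<h2>Semantic HTML</h2>
<h4>Div soup</h4>
<h2>JSON-LD</h2>
"""

outline = heading_outline(PAGE)
for level, text in outline:
    print("  " * (level - 1) + text)  # indented outline, headings only
print(skipped_levels(outline))
# last line printed: ["h4 'Div soup' follows h2"]
```

If the indented outline does not read as a summary of the article's argument, the headings are not yet doing the job described above.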

The Call to Dominance

The era of “designing for humans” is over. We are now designing for Human-AI Hybrids.

The human consumes the content, but the AI delivers it. The AI is the gatekeeper. The AI is the delivery truck. If you ignore the delivery mechanism, the consumer never arrives.

You have a stark choice in 2026.

You can continue to build pretty, unstructured websites that look good in a portfolio but remain invisible to the algorithms that rule the internet. You can let your content rot in a div soup of obscurity.

Or, you can implement an AI-readable site structure today. You can organize your information design, tighten your content hierarchy, and speak the language of the machines that control the flow of information.

Are you ready to build a digital fortress that survives the AI shift, or will you let your website become a relic of the manual web?

The architecture is the strategy. Build accordingly.

Tags: #sitearchitecture, #technicalseo, #aifriendly, #semantichtml, #webstructure, #contenthierarchy, #crawlability, #indexing, #robotfriendly, #informationdesign
